Agentic Design Patterns

At Devoxx France 2026 and JNation 2026, I had the pleasure of presenting a session on Agentic Design Patterns. In this talk, I explore how to move beyond basic LLM wrappers to build reliable, scalable, and sophisticated AI agent systems.

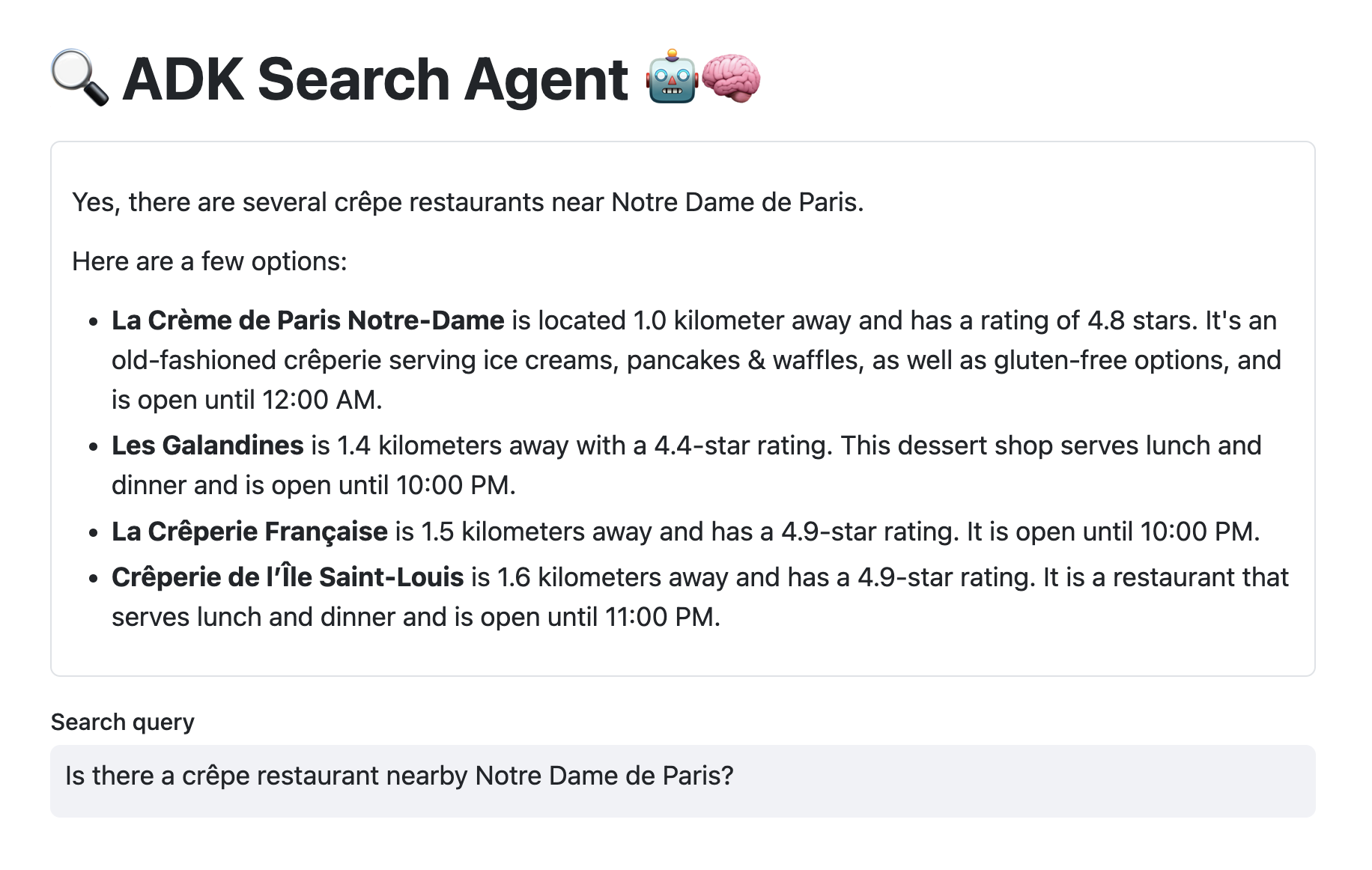

In the coming weeks, I’ll be blogging about some of these patterns, which I implemented using LangChain4j and ADK for Java.

Abstract

It’s time to dive into the deep end, far from “hello world” demos. To build your multi-agent systems, you often start by assembling classic bricks: sequential or parallel flows, or loops. The basics!
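To make those classic bricks concrete, here is a minimal sketch in plain Java. It deliberately uses no LangChain4j or ADK for Java APIs: the `Agent` interface and the `sequence`, `parallel`, and `loop` helpers are hypothetical names invented for this illustration, and each "agent" is just a function from text to text where a real one would call an LLM.

```java
import java.util.List;
import java.util.concurrent.CompletableFuture;
import java.util.function.Predicate;
import java.util.function.UnaryOperator;
import java.util.stream.Collectors;

public class AgentFlows {

    // Hypothetical stand-in for an agent: text in, text out.
    interface Agent extends UnaryOperator<String> {}

    // Sequential flow: each agent receives the previous agent's output.
    static Agent sequence(List<Agent> agents) {
        return input -> {
            String current = input;
            for (Agent agent : agents) {
                current = agent.apply(current);
            }
            return current;
        };
    }

    // Parallel flow: every agent gets the same input concurrently;
    // results are joined in the order the agents were listed.
    static Agent parallel(List<Agent> agents, String separator) {
        return input -> {
            List<CompletableFuture<String>> futures = agents.stream()
                .map(agent -> CompletableFuture.supplyAsync(() -> agent.apply(input)))
                .collect(Collectors.toList());
            return futures.stream()
                .map(CompletableFuture::join)
                .collect(Collectors.joining(separator));
        };
    }

    // Loop: re-run the agent until the exit condition holds
    // or the iteration budget is exhausted.
    static Agent loop(Agent agent, Predicate<String> done, int maxIterations) {
        return input -> {
            String current = input;
            for (int i = 0; i < maxIterations && !done.test(current); i++) {
                current = agent.apply(current);
            }
            return current;
        };
    }

    public static void main(String[] args) {
        Agent upper = s -> s.toUpperCase();
        Agent exclaim = s -> s + "!";

        System.out.println(sequence(List.of(upper, exclaim)).apply("hi"));
        System.out.println(parallel(List.of(upper, exclaim), " | ").apply("hi"));
        System.out.println(loop(exclaim, s -> s.length() >= 5, 10).apply("hi"));
    }
}
```

Because each helper returns an `Agent` itself, the bricks nest freely: a loop can wrap a sequence, and a sequence can contain a parallel step. The iteration cap in `loop` is a design choice worth keeping in any real implementation, since an exit condition judged by a model may never be met.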
